What is Context Engineering?

Context Engineering is the deliberate design and orchestration of all information sent into a language model’s context window—not just the prompt, but also memory, retrieved knowledge, prior outputs, tool descriptions, and more. It aims to ensure the model has everything it needs at inference time to perform optimally.

In short, it’s about curating the most relevant, structured, and timely context to guide the LLM’s behavior effectively.

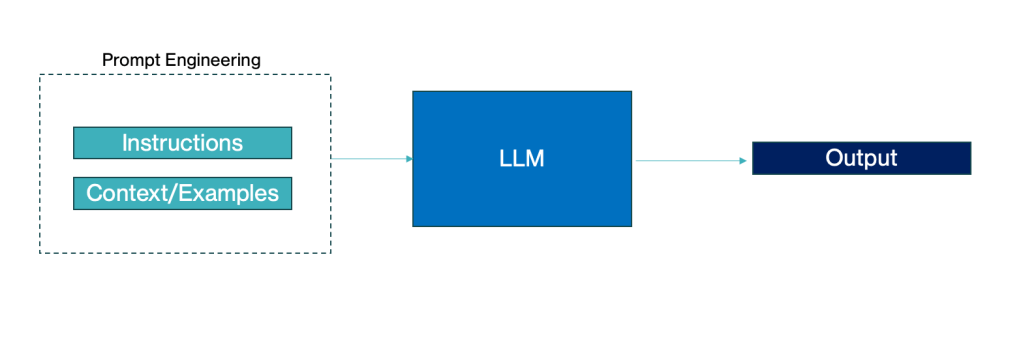

How is Context Engineering different from Prompt Engineering?

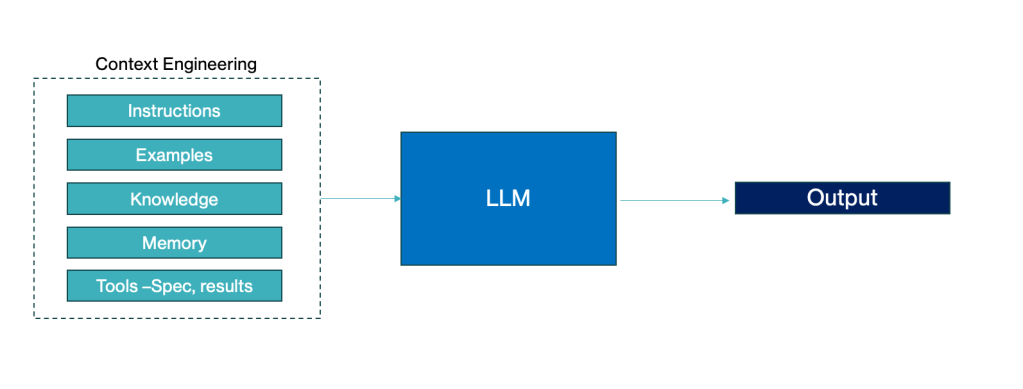

Prompt Engineering is a subset of Context Engineering. While prompt engineering focuses on crafting the right instruction or phrasing to elicit a desired response from a language model, context engineering goes broader. It is the discipline of deciding what information to place in the context window — including prompts, examples, knowledge, memory, user history, and tool outputs — to drive better AI behavior.


Why is Context Engineering becoming important now?

- LLMs are capable of more: As models grow in power, they can perform multi-step tasks, call tools, and retrieve documents, but only when they are given the right context to do so.

- Emergence of Agents: Frameworks like LangChain and LlamaIndex, and agent patterns such as ReAct, rely on rich, dynamic context to plan and act.

- Longer Context Windows: With 100k+ token limits, managing what goes into context becomes a design problem.

- Multi-modal and multi-turn systems: These require continuity and relevance across inputs—enabled by engineered context.

What does Context Include?

Based on the diagram above and common practice, context typically includes:

- Instructions: The task, expected format, or role-based framing

- Examples: Few-shot examples that help the model generalize

- Knowledge: Retrieved facts, documents, or outputs from RAG systems

- Memory: Persistent facts or conversation history

- Tool Specs & Outputs: What tools the agent has access to and what they returned
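To make the components above concrete, here is a minimal sketch of how they might be held together and rendered into one block of text. The `ContextBundle` class, its field names, and the `## Title` section layout are all hypothetical choices made for illustration; they are not taken from any particular library.

```python
from dataclasses import dataclass, field


@dataclass
class ContextBundle:
    """Hypothetical container for the context components listed above."""
    instructions: str
    examples: list[str] = field(default_factory=list)
    knowledge: list[str] = field(default_factory=list)
    memory: list[str] = field(default_factory=list)
    tool_specs: list[str] = field(default_factory=list)
    tool_outputs: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Join the non-empty components into one labeled text block."""
        sections = [
            ("Instructions", [self.instructions]),
            ("Examples", self.examples),
            ("Knowledge", self.knowledge),
            ("Memory", self.memory),
            ("Tool specs", self.tool_specs),
            ("Tool outputs", self.tool_outputs),
        ]
        parts = []
        for title, items in sections:
            if items:  # skip components that have nothing to contribute
                body = "\n".join(f"- {item}" for item in items)
                parts.append(f"## {title}\n{body}")
        return "\n\n".join(parts)


bundle = ContextBundle(
    instructions="Answer in one sentence.",
    knowledge=["The refund window is 30 days."],
    memory=["User prefers concise answers."],
)
prompt_text = bundle.render()
print(prompt_text)
```

Keeping each component in its own field makes it easy to see which ones are empty for a given request, and to change how any one of them is sourced without touching the rest.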

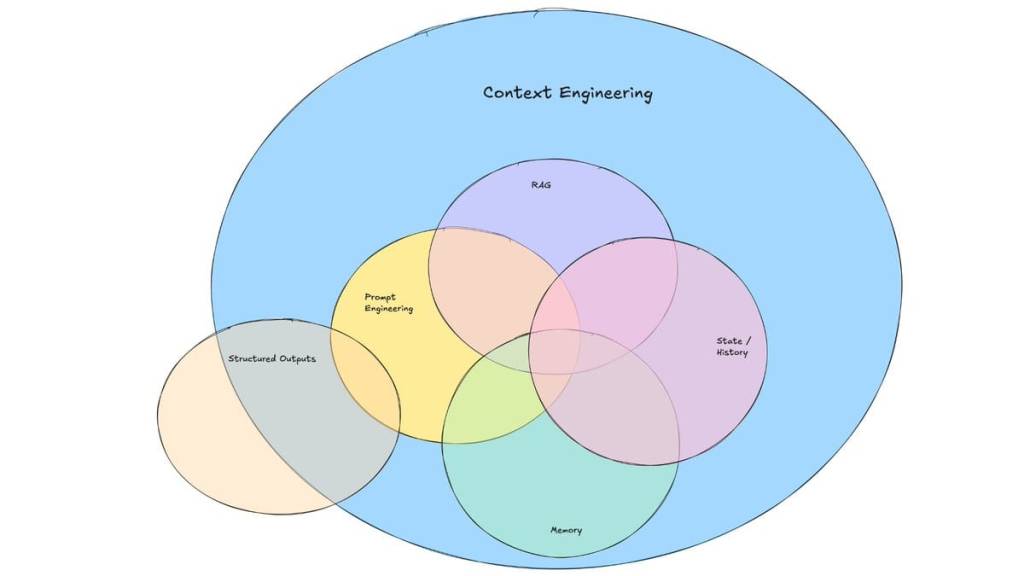

Is Context Engineering the same as RAG?

Not quite.

- RAG (Retrieval-Augmented Generation) is a specific technique for adding external knowledge to the context window—usually by retrieving top-k passages from a vector store.

- Context Engineering is a broader discipline that includes RAG, but also other techniques like:

  - Tool call orchestration

  - Working memory (e.g., agent scratchpads)

  - History summarization

  - Chain-of-thought engineering

  - Adaptive prompt templates

Image Credit – LangChain Blog
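The top-k retrieval step that RAG contributes to the context can be sketched in a few lines. This is a toy version under stated assumptions: it scores passages with bag-of-words cosine similarity instead of learned embeddings, and it searches an in-memory list instead of a vector store. The function names and sample passages are made up for the example.

```python
import math
import re
from collections import Counter


def bag_of_words(text: str) -> Counter:
    """Lowercase the text and count its alphanumeric tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve_top_k(query: str, passages: list[str], k: int = 2) -> list[str]:
    """Return the k passages most similar to the query (toy stand-in for a vector store)."""
    q = bag_of_words(query)
    ranked = sorted(passages, key=lambda p: cosine(q, bag_of_words(p)), reverse=True)
    return ranked[:k]


passages = [
    "A refund is accepted within 30 days of purchase.",
    "Our office is closed on public holidays.",
    "Shipping takes three to five business days.",
]
top = retrieve_top_k("What is the refund window after purchase?", passages, k=1)
print(top)
```

In a real system the scoring function would usually be replaced by an embedding model and an index, but the retrieved passages feed the context window in the same way. The other techniques in the list (tool orchestration, working memory, history summarization) add further sources alongside this one.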

What does a Context Engineering pipeline look like?

- Determine task type (static QA, codegen, agent action, etc.)

- Identify relevant context types (e.g., tool specs, documents, examples)

- Fetch, rank, filter, and format each context source

- Assemble into a coherent input prompt

- Inject into model via API call

- Track and refine using feedback loops (e.g., error cases or token usage)
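The rank, filter, assemble, inject, and track steps above can be sketched as follows. This is an illustration under assumptions, not a reference implementation: `estimate_tokens` approximates one token per whitespace-separated word (real tokenizers differ), the relevance scores are supplied by hand, and `call_model` is a hypothetical placeholder for whatever model API is actually used.

```python
def estimate_tokens(text: str) -> int:
    """Rough assumption: about one token per whitespace-separated word."""
    return len(text.split())


def assemble_context(instructions: str, candidates: list[tuple[float, str]], budget: int):
    """Keep the highest-scoring snippets that still fit in the token budget.

    candidates is a list of (relevance_score, text) pairs.
    Returns the assembled prompt and the estimated tokens used.
    """
    used = estimate_tokens(instructions)
    kept = []
    for _score, text in sorted(candidates, key=lambda c: c[0], reverse=True):
        cost = estimate_tokens(text)
        if used + cost <= budget:  # filter: drop snippets that would overflow
            kept.append(text)
            used += cost
    return "\n\n".join([instructions, *kept]), used


def call_model(prompt: str) -> str:
    """Hypothetical placeholder for a real model API call."""
    return f"[model reply to {estimate_tokens(prompt)} tokens of context]"


instructions = "Answer using only the context below."
candidates = [
    (0.9, "Refunds are accepted within 30 days of purchase."),
    (0.2, "Our office is closed on public holidays."),
    (0.7, "Refund requests need the original receipt."),
]
budget = 20

prompt, used = assemble_context(instructions, candidates, budget)
reply = call_model(prompt)

# Track: log token usage so the budget and ranking can be tuned later.
usage_log = {"tokens_used": used, "budget": budget}
print(prompt)
print(usage_log)
```

With this budget the two most relevant snippets are kept and the least relevant one is dropped. The logged usage is the kind of signal the final feedback step would act on.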

Overall, context engineering is the strategic design of what goes into a language model’s context window to guide intelligent behavior. It goes beyond prompt engineering by incorporating memory, knowledge, and tool outputs. This practice is critical for building advanced, agentic AI systems.
