What is MCP?

MCP (Model Context Protocol) is an open standard that defines how AI applications, especially those using LLMs (Large Language Models), connect to external data and tools.

Simple Analogy:

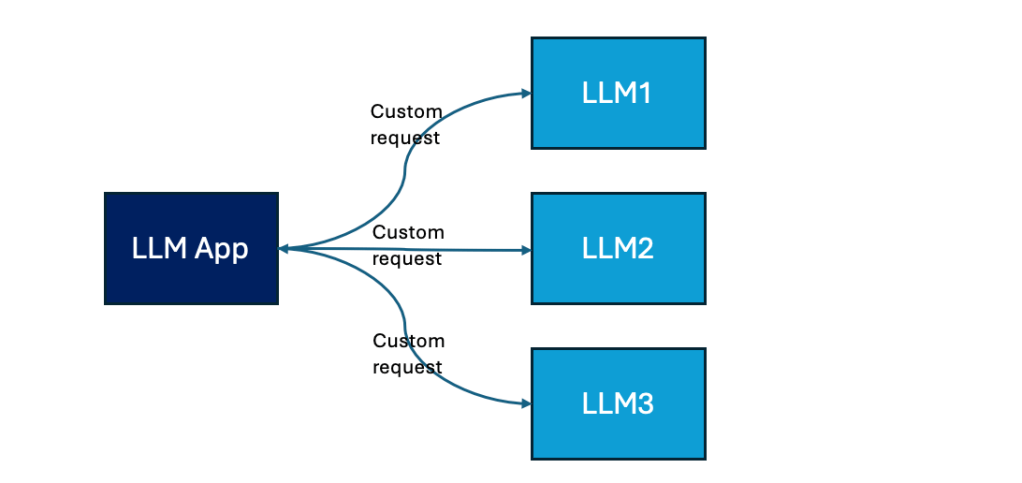

Without MCP:

You’re speaking a different “language” in every country you visit.

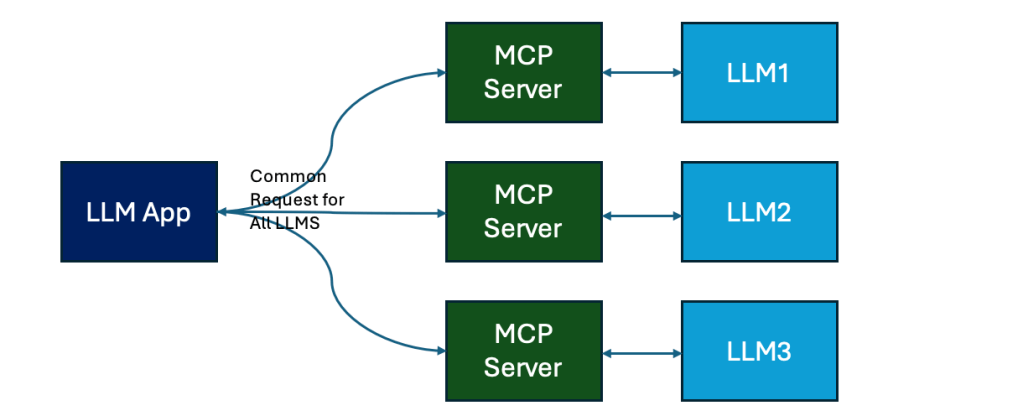

With MCP:

Everyone speaks the same language — so you can navigate anywhere with ease.

Why is MCP Needed?

The Problem Today:

Each AI application currently builds custom integrations to access different data sources — meaning more development time, fragility, and higher cost.

MCP Solves This by Offering:

- ✅ Standardized connection to various data and tools

- ✅ Simplified switching between different LLMs or providers

- ✅ Stronger security through clearly defined connection structures
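To make "standardized connection" a little more concrete, here is a minimal sketch of the kind of message an MCP client sends when it asks a server to run a tool. MCP messages are JSON-RPC 2.0, and `tools/call` is the method used to invoke a tool. The tool name `create_ticket` and its arguments are hypothetical, chosen only for illustration.

```python
import json
from itertools import count

# JSON-RPC requests carry an id so responses can be matched to requests.
_next_id = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request asking an MCP server to run a tool."""
    return {
        "jsonrpc": "2.0",
        "id": next(_next_id),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool and arguments, for illustration only.
request = build_tool_call("create_ticket", {"category": "billing"})
print(json.dumps(request, indent=2))
```

Because every tool is called with this same message shape, the application does not need a different integration style for each data source.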

Who Created MCP?

- Anthropic (creators of the Claude models) introduced MCP as an open standard in November 2024.

- Leading AI players like Hugging Face, Meta, Microsoft, OpenAI, Google DeepMind, and others are actively collaborating and supporting this standard.

- MCP is published as an open specification with open-source SDKs, so anyone can implement it, much as anyone can implement the web's HTTP protocol.

A Simple Use Case:

You are building a Customer Support Chatbot.

The chatbot sometimes needs to:

- Answer FAQs using GPT-4.

- Handle sensitive complaints using Claude.

- Summarize long documents (uploaded by the user) using Gemini.

Without MCP: You would have to write different logic for each model.

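With MCP: The data and tools the chatbot relies on (FAQ content, the ticketing system, uploaded documents) sit behind one standard interface, so the integration logic stays the same whichever model handles the conversation.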

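Sample Flow with MCP

Note: the payloads in this walkthrough are a simplified illustration of the idea of one shared message format. They are not the actual MCP wire format, which is JSON-RPC 2.0 exchanged between an MCP client and an MCP server.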

- User Message

User types:

“I want to file a complaint about a billing issue.”

- Backend generates an MCP request

```json
{
  "mcp_version": "1.0",
  "task": "customer_support",
  "intent": "file_complaint",
  "category": "billing",
  "user_message": "I want to file a complaint about a billing issue."
}
```

- Routing based on task

  - For complaints, you send this request to Claude (the model chosen in this example for sensitive conversations).

  - For general FAQs, you send a request in the same format to GPT-4.

- Model Response (always in MCP format)

```json
{
  "mcp_version": "1.0",
  "task_response": {
    "confirmation_message": "We have registered your billing complaint. Our team will reach out to you shortly.",
    "ticket_id": "CMP123456"
  }
}
```

- Bot Replies to User

“✅ Your billing complaint has been registered. Ticket ID: CMP123456. We’ll contact you soon!”
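The flow above can be sketched in a few lines of Python. This is a rough sketch under stated assumptions, not a real MCP client: the field names are copied from the illustrative payloads in the walkthrough, the routing table is an assumption based on the use case, and `fake_model_call` is a hypothetical stand-in that returns the canned response instead of calling any model.

```python
# Intent -> model routing table. The intent names other than
# "file_complaint" are assumptions made for this sketch.
ROUTES = {
    "file_complaint": "claude",
    "faq": "gpt-4",
    "summarize_document": "gemini",
}
DEFAULT_MODEL = "gpt-4"


def build_request(user_message: str, intent: str, category: str) -> dict:
    """Build the illustrative request payload used in the walkthrough."""
    return {
        "mcp_version": "1.0",
        "task": "customer_support",
        "intent": intent,
        "category": category,
        "user_message": user_message,
    }


def pick_model(request: dict) -> str:
    """Choose a model by intent, falling back to a default."""
    return ROUTES.get(request["intent"], DEFAULT_MODEL)


def fake_model_call(model: str, request: dict) -> dict:
    """Hypothetical stub: returns the canned response from the walkthrough."""
    return {
        "mcp_version": "1.0",
        "task_response": {
            "confirmation_message": "We have registered your billing complaint. "
            "Our team will reach out to you shortly.",
            "ticket_id": "CMP123456",
        },
    }


def format_reply(response: dict) -> str:
    """Turn the structured response into the text shown to the user."""
    body = response["task_response"]
    return f"{body['confirmation_message']} Ticket ID: {body['ticket_id']}."


request = build_request(
    "I want to file a complaint about a billing issue.", "file_complaint", "billing"
)
model = pick_model(request)
print(model, "->", format_reply(fake_model_call(model, request)))
```

Keeping routing (`pick_model`) separate from formatting (`format_reply`) means the rest of the backend never needs to know which model produced the response.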

✨ Benefits

- One common interface for all LLMs (Claude, GPT-4, Gemini, etc.)

- Easier switching: If Claude is busy, you can send the same MCP request to another model!

- Future-proofing: As new models arrive, you don’t need to redesign your system.

- Clean separation: Business logic is separate from LLM differences.
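The "easier switching" benefit can be illustrated with a small fallback helper. This is a sketch under assumptions: `ModelUnavailable`, `send_with_fallback`, and the two backend functions are hypothetical names invented here, and the backends are stubs rather than real API calls.

```python
from typing import Callable, List, Sequence, Tuple

# A backend is a (name, function) pair; the function takes a request dict
# and returns a response dict in the same shared format.
Backend = Tuple[str, Callable[[dict], dict]]


class ModelUnavailable(Exception):
    """Raised by a backend that cannot serve the request right now."""


def send_with_fallback(request: dict, backends: Sequence[Backend]) -> Tuple[str, dict]:
    """Try each backend in order; return the first successful (name, response)."""
    errors: List[str] = []
    for name, call in backends:
        try:
            return name, call(request)
        except ModelUnavailable as exc:
            errors.append(f"{name}: {exc}")
    raise ModelUnavailable("; ".join(errors) or "no backends configured")


# Stub backends for demonstration only.
def busy_backend(request: dict) -> dict:
    raise ModelUnavailable("rate limited")


def working_backend(request: dict) -> dict:
    return {"mcp_version": "1.0", "task_response": {"echo": request["intent"]}}


used, response = send_with_fallback(
    {"intent": "file_complaint"},
    [("claude", busy_backend), ("gpt-4", working_backend)],
)
print(used, response)
```

Since every backend accepts and returns the same shape, the fallback logic needs no per-model branches.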
